Why A/B Testing Gets Complicated with Short URLs

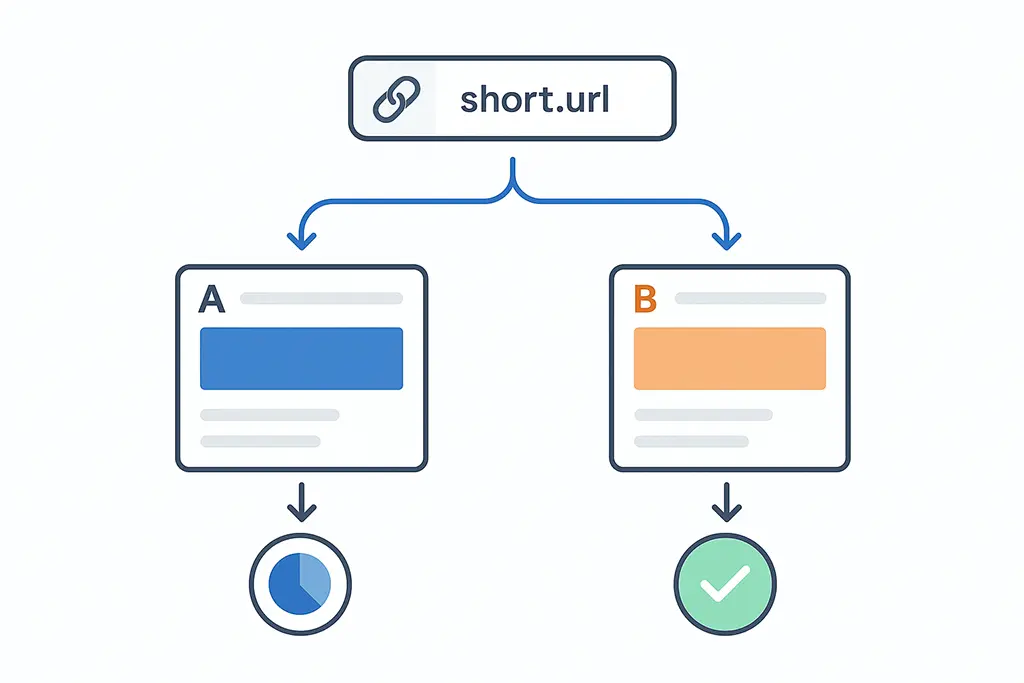

A/B testing is supposed to be simple. Two variants, one winner. But the moment short URLs and redirects get involved, that clarity quietly starts to unravel — and the frustrating part is that nothing looks broken on the surface. Your dashboard still fills with data. Statistical significance still arrives on schedule. The experiment just stops measuring what you think it's measuring.

I've seen teams spend weeks iterating on copy and button colors while the actual problem sat in a 301 redirect that was silently stripping UTM parameters and resetting sessions. Nobody suspects the redirect layer. That's what makes it dangerous.

This article focuses on one specific, underexplored problem: running A/B tests on landing pages that users reach through short URLs. Not the theory — the mechanics of where things actually break, why the breakage is invisible, and how to structure your setup so the results you get are results you can actually use.

The Hidden Journey Before Your Landing Page Loads

From the user's perspective, clicking a short link feels instant. One tap, one page. From a technical perspective, that click kicks off a chain of events that touches multiple systems before the landing page even begins to render.

Here's what typically happens: the user clicks a shortened URL, the request hits your redirect server, the server evaluates the destination (sometimes with conditional logic for device or geo), tracking parameters get appended or passed through, the browser follows the Location header, and only then does the final page load. Each of those steps is a failure point.

Redirects can alter the referrer header. Parameters can be silently dropped. Sessions can restart when crossing domains. And A/B testing tools — which depend on consistent session context to assign and maintain variants — can misclassify users in ways that never appear in error logs.
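One way to make silently dropped parameters visible is to compare the query string on the clicked short link with the query string on the URL the user finally landed on. The sketch below assumes you can log both URLs; the class name, method names, and example hosts are illustrative, not part of any particular tool.

```java
import java.net.URI;
import java.net.URLDecoder;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashSet;
import java.util.Set;

final class RedirectParamCheck {

    // Collects the decoded parameter names from a URI's query string.
    static Set<String> paramNames(URI uri) {
        Set<String> names = new LinkedHashSet<>();
        String query = uri.getRawQuery();
        if (query == null || query.isEmpty()) {
            return names;
        }
        for (String pair : query.split("&")) {
            if (pair.isEmpty()) {
                continue;
            }
            int eq = pair.indexOf('=');
            String rawName = eq >= 0 ? pair.substring(0, eq) : pair;
            names.add(URLDecoder.decode(rawName, StandardCharsets.UTF_8));
        }
        return names;
    }

    // Returns parameters present on the clicked link but missing on the landing URL.
    static Set<String> droppedParams(URI clicked, URI landed) {
        Set<String> dropped = paramNames(clicked);
        dropped.removeAll(paramNames(landed));
        return dropped;
    }

    public static void main(String[] args) {
        // Hypothetical URLs for illustration only.
        URI clicked = URI.create("https://short.example/abc?utm_source=email&utm_campaign=spring&exp_id=42");
        URI landed = URI.create("https://example.com/landing?exp_id=42");
        System.out.println(droppedParams(clicked, landed)); // [utm_source, utm_campaign]
    }
}
```

A non-empty result for any sampled click is a sign that something in the chain is rewriting the query string.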

The dangerous part isn't that failures happen. It's that they happen silently. The experiment continues. Significance is reached. A decision gets made. And the underlying data was compromised from the first click.

How A/B Testing Tools Decide Who Sees What

Most experimentation platforms — whether you're using a dedicated tool or rolling your own with server-side logic — follow the same basic assignment pattern. When a user arrives, the system hashes some identifier (user ID, cookie, or session token) against the experiment configuration and assigns a variant. That assignment persists as long as the identifier persists.
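The assignment pattern described above can be sketched in a few lines. This is a generic illustration, not the algorithm of any specific platform: the choice of SHA-256, the `experimentKey:identifier` input format, and all names are assumptions.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

final class VariantAssigner {

    // Deterministically maps (experimentKey, identifier) to a bucket in [0, variantCount).
    static int assign(String experimentKey, String identifier, int variantCount) {
        if (variantCount <= 0) {
            throw new IllegalArgumentException("variantCount must be positive");
        }
        try {
            MessageDigest sha256 = MessageDigest.getInstance("SHA-256");
            byte[] digest = sha256.digest(
                    (experimentKey + ":" + identifier).getBytes(StandardCharsets.UTF_8));
            // Interpret the first four bytes as an unsigned 32-bit integer.
            long value = ((digest[0] & 0xFFL) << 24)
                    | ((digest[1] & 0xFFL) << 16)
                    | ((digest[2] & 0xFFL) << 8)
                    | (digest[3] & 0xFFL);
            return (int) (value % variantCount);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 should be available on every JDK", e);
        }
    }

    public static void main(String[] args) {
        // Hypothetical cookie values: one set before the redirect, one regenerated after it.
        String cookieBefore = "cookie-7f3a";
        String cookieAfter = "cookie-91bc";
        System.out.println(assign("landing-hero-test", cookieBefore, 2));
        System.out.println(assign("landing-hero-test", cookieBefore, 2)); // same bucket as the line above
        System.out.println(assign("landing-hero-test", cookieAfter, 2));  // fresh draw: may or may not match
    }
}
```

The same identifier always lands in the same bucket. A regenerated identifier gets an independent draw, which is exactly what happens when a redirect resets the cookie or session.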

The entire model depends on one assumption: the first pageview is stable and attributable. Short links attack that assumption directly. If the redirect resets the session, the assignment either gets regenerated (potentially different variant) or fails to happen at all. If the identifier changes mid-journey, the user might be counted twice under different variants.
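If you have a key that survives the redirect, such as a logged-in user ID or a click ID carried in the URL, you can audit for users counted under more than one variant. The sketch below assumes exposure records of the form `{stableKey, variant}`; that record shape and the names are hypothetical.

```java
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;
import java.util.TreeSet;

final class ExposureAudit {

    // Each exposure is {stableKey, variant}. Returns only keys seen under more than one variant.
    static Map<String, Set<String>> conflictingExposures(List<String[]> exposures) {
        Map<String, Set<String>> variantsByKey = new TreeMap<>();
        for (String[] exposure : exposures) {
            variantsByKey.computeIfAbsent(exposure[0], k -> new TreeSet<>()).add(exposure[1]);
        }
        // Drop keys that were consistently assigned to a single variant.
        variantsByKey.values().removeIf(variants -> variants.size() < 2);
        return variantsByKey;
    }

    public static void main(String[] args) {
        List<String[]> exposures = List.of(
                new String[] {"click-1", "A"},
                new String[] {"click-1", "B"},
                new String[] {"click-2", "A"});
        System.out.println(conflictingExposures(exposures)); // {click-1=[A, B]}
    }
}
```

A handful of conflicts is normal noise. A rate that differs sharply by traffic source points at the redirect layer.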

In a particularly bad scenario, one variant appears to outperform another not because it converts better, but because its traffic happened to come from channels where cookies survive redirects more reliably. That's not a conversion win. That's a measurement artifact being mistaken for a product insight.

Why Redirect Timing Matters More Than You Think

One detail that rarely gets documented properly: where in the redirect chain does experiment assignment happen? This matters more than most teams realize.

If users are bucketed before the redirect fires, the redirect must preserve whatever identifier was used for assignment — typically a URL parameter or a cookie that your platform set. If users are bucketed after the redirect completes, the redirect must pass through enough context for the experiment logic to run correctly at the destination.

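Problems accumulate when neither happens cleanly. Timing matters most for client-side redirects (JavaScript or meta refresh), where an intermediate page actually loads. If that page redirects immediately, the destination may see the intermediate page, or nothing, as the referrer instead of the original source. If it lingers long enough for analytics to fire, the same visit can be counted on both the intermediate and final pages. And redirects that behave differently across environments (iOS Safari vs. Android Chrome vs. desktop Firefox) can produce variant exposure skew that looks like a real treatment effect but isn't.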

This is worth stress-testing explicitly. Don't assume your redirect behaves consistently across clients. Measure it.

Designing Experiments Around Short URLs

If short URLs are unavoidable — and in most email, SMS, or paid social campaigns, they are — the answer isn't to remove them. It's to design experiments that account for their behavior from the start.

The core principle: the short link should act as a pure routing layer. It forwards traffic. It doesn't branch, it doesn't modify attribution signals, and it doesn't make decisions based on user properties. All experiment logic lives at the destination.

When short links start doing more — geo-routing, device detection, conditional logic for different campaign audiences — they become part of your experiment whether you intended that or not. At that point you're no longer testing a landing page variant. You're testing a distributed system where inputs vary in ways you don't fully control.

Distributed systems are interesting to build. They produce terrible A/B test results.

Common A/B Testing Pitfalls with Redirected Traffic

Most failed experiments involving short URLs don't fail because of bad statistics. They fail because of hidden assumptions that nobody thought to verify.

One is the assumption that all traffic arrives at the landing page in the same technical state. In practice, traffic from messaging apps, email clients, in-app browsers, and social platforms behaves very differently during redirects. Some environments delay cookie writes past the point where assignment logic runs. Others block third-party scripts outright. Some restart sessions at every domain boundary. Each of these behaviors can bias variant exposure in ways that look like signal.

Another common pitfall: changing the redirect configuration during a live experiment. Teams sometimes update link destinations, add conditional routing, or switch from 301 to 302 (or vice versa) mid-test without realizing this can alter cache behavior and change how many users hit the redirect server at all. Cached 301s are especially problematic: browsers can reuse a cached permanent redirect without re-requesting the short URL, so returning users keep landing on whatever destination was cached until that cache entry expires or is cleared.

The rule worth keeping: never change redirect behavior during a live experiment. If you need to update the destination URL, end the experiment, make the change, then restart.
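One lightweight way to enforce that rule is to record a fingerprint of the redirect configuration when the experiment starts and compare it on a schedule. This is only a sketch: it assumes the relevant configuration is the short code, the status code, and the destination, and every name in it is hypothetical.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

final class RedirectConfigFreeze {

    // Hex-encoded SHA-256 over the fields that define the redirect's behavior.
    static String fingerprint(String shortCode, int statusCode, String destination) {
        try {
            MessageDigest sha256 = MessageDigest.getInstance("SHA-256");
            String canonical = shortCode + "\n" + statusCode + "\n" + destination;
            byte[] digest = sha256.digest(canonical.getBytes(StandardCharsets.UTF_8));
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 should be available on every JDK", e);
        }
    }

    public static void main(String[] args) {
        // Baseline taken at experiment start (hypothetical values).
        String baseline = fingerprint("abc", 302, "https://example.com/landing");
        // Later check: someone switched the redirect to a 301 mid-test.
        String current = fingerprint("abc", 301, "https://example.com/landing");
        System.out.println(baseline.equals(current) ? "unchanged" : "redirect config changed during experiment");
    }
}
```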

Attribution vs Experiment Results: When Metrics Disagree

One of the most disorienting situations in short-link-driven testing is when your A/B test and your attribution reports tell different stories.

Variant B converts better in the experiment. But your attribution model shows fewer conversions credited to that campaign. Both numbers can be technically correct. They're measuring different things through different lenses, with the redirect sitting between them.

Experiments measure on-page behavior after arrival. Attribution models measure the full journey from click to conversion, including credit for the channel that drove the click. Short links sit at exactly the boundary between those two perspectives.

When a redirect drops UTM parameters, attribution loses the channel signal — clicks get credited to direct traffic or not credited at all. When a redirect restarts sessions, experiments lose continuity — the same user might be assigned different variants across page loads. These failures can happen asymmetrically across variants, which is how you end up with results that genuinely disagree rather than just measuring different things.
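To check whether parameter loss is asymmetric, compare how often `utm_source` survives to the landing page for each variant. The sketch below assumes landing records of the form `{variant, landingUrl}`; the record shape and names are illustrative.

```java
import java.net.URI;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class UtmRetention {

    // True if the raw query string contains the given parameter name.
    static boolean hasParam(URI uri, String name) {
        String query = uri.getRawQuery();
        if (query == null) {
            return false;
        }
        for (String pair : query.split("&")) {
            if (pair.equals(name) || pair.startsWith(name + "=")) {
                return true;
            }
        }
        return false;
    }

    // Each landing is {variant, landingUrl}. Returns the share of landings per variant that kept utm_source.
    static Map<String, Double> retentionByVariant(List<String[]> landings) {
        Map<String, int[]> counts = new TreeMap<>(); // value = {kept, total}
        for (String[] landing : landings) {
            int[] c = counts.computeIfAbsent(landing[0], k -> new int[2]);
            if (hasParam(URI.create(landing[1]), "utm_source")) {
                c[0]++;
            }
            c[1]++;
        }
        Map<String, Double> rates = new TreeMap<>();
        counts.forEach((variant, c) -> rates.put(variant, (double) c[0] / c[1]));
        return rates;
    }

    public static void main(String[] args) {
        List<String[]> landings = List.of(
                new String[] {"A", "https://example.com/l?utm_source=email"},
                new String[] {"A", "https://example.com/l?utm_source=sms"},
                new String[] {"B", "https://example.com/l?utm_source=email"},
                new String[] {"B", "https://example.com/l"});
        System.out.println(retentionByVariant(landings)); // {A=1.0, B=0.5}
    }
}
```

Similar retention across variants suggests the two reports are simply measuring different things. A large gap suggests the redirect is treating the variants differently.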

This is why careful teams treat experiment results as diagnostic signals with specific conditions attached, not as universal truths. The result is: "Variant B converted better, under these specific redirect conditions, for this traffic mix." That's useful. It's also more honest.

How to Structure Short Links for Reliable Experiments

The structural rules that make A/B testing survivable with redirects are straightforward, even if they require some discipline to enforce.

First, resolve to a single canonical destination during experiments. No branching inside the redirect layer. If variants need to differ, handle that at the landing page level — not at the routing layer. The short link points to one URL; the experiment logic there handles the split.

Second, pass query parameters faithfully. UTMs, experiment identifiers, attribution tags — all of them should survive the redirect without modification. A short link service that "cleans up" URLs by stripping parameters is destroying data that downstream systems depend on. Here's what that preservation looks like in a Spring Boot redirect handler:

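```java
import java.net.URI;
import org.springframework.http.HttpHeaders;
import org.springframework.http.HttpStatus;
import org.springframework.http.ResponseEntity;
import org.springframework.util.MultiValueMap;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.PathVariable;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.util.UriComponentsBuilder;

// Handler method inside a @RestController that has a linkService field.
@GetMapping("/{shortCode}")
public ResponseEntity<Void> redirect(
        @PathVariable String shortCode,
        @RequestParam MultiValueMap<String, String> queryParams) {

    String destination = linkService.resolve(shortCode);
    if (destination == null) {
        return ResponseEntity.notFound().build();
    }

    // Preserve all incoming query parameters on the destination
    UriComponentsBuilder builder = UriComponentsBuilder.fromUriString(destination);
    builder.queryParams(queryParams);

    HttpHeaders headers = new HttpHeaders();
    headers.setLocation(URI.create(builder.toUriString()));

    // Use 302 during experiments so browsers do not cache the destination
    return ResponseEntity.status(HttpStatus.FOUND).headers(headers).build();
}
```

Note the 302 instead of 301. During an experiment, you want the redirect to be re-evaluated on each visit. A cached 301 means users who visited before the experiment started can skip the redirect server entirely: they go straight to whatever destination was cached, bypassing any parameter handling or logging the redirect performs.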

Third, minimize redirect depth. Each additional hop increases the probability of session loss, referrer stripping, or inconsistent browser behavior. In testing scenarios, one redirect is tolerable. Two is already introducing risk. Three or more is a configuration problem, not a testing problem.

Here's a minimal Nginx configuration that ensures parameters survive and caching is suppressed for experiment URLs:

```nginx
location ~* ^/e/(.+)$ {
    # Experiment shortlinks: no caching, parameters preserved
    add_header Cache-Control "no-store, no-cache, must-revalidate";
    add_header Pragma "no-cache";

    proxy_pass http://app_backend;
    proxy_set_header X-Experiment-Traffic "true";
    proxy_set_header X-Original-URI $request_uri;
}
```

Finally: document the redirect logic. If someone on the team cannot walk through the full journey from short link to conversion event without opening source code, the system is already too complex to produce clean experiment results.

Interpreting Results Without Lying to Yourself

The most dangerous outcome of a contaminated A/B test isn't a failed experiment. It's a confident decision built on bad data — shipped, defended in a meeting, and used as evidence for the next six months of roadmap decisions.

Short URLs amplify this risk because the contamination layer is invisible. The experiment ran. Significance was reached. The variant shipped. Nobody knows that the session discontinuity from a mid-chain domain crossing quietly biased the assignment distribution.

When results look surprisingly strong or strangely flat, the right instinct isn't celebration or panic. It's the question: did both variants experience the same redirect conditions?

Specifically, check: were UTM parameters preserved for both variants equally? Did both experience the same mix of traffic sources, with the same session behavior? If your experiment runs across multiple channels (email, SMS, paid social), was the variant distribution consistent within each channel — or did one variant get disproportionately more email traffic, where cookies survive better?

If you can't answer those questions cleanly, the honest interpretation might be: "We got data. We're not confident it measured what we intended." That's not failure. That's exactly the kind of epistemic honesty that prevents bad product decisions.
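The channel-level question above can be turned into a simple sample-ratio check. The sketch below assumes an intended 50/50 split and exposure counts broken down by channel. The threshold of 10.83 is the chi-square critical value at p = 0.001 with one degree of freedom, a deliberately strict cutoff chosen here as an assumption. All names are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class ChannelSplitCheck {

    // Chi-square critical value for p = 0.001 with 1 degree of freedom.
    static final double THRESHOLD = 10.83;

    // Chi-square statistic for observed counts against an intended 50/50 split.
    static double chiSquare(long countA, long countB) {
        double expected = (countA + countB) / 2.0;
        if (expected == 0) {
            return 0.0;
        }
        double diffA = countA - expected;
        double diffB = countB - expected;
        return (diffA * diffA + diffB * diffB) / expected;
    }

    // countsByChannel maps channel -> {exposures in A, exposures in B}.
    static Map<String, Double> suspiciousChannels(Map<String, long[]> countsByChannel) {
        Map<String, Double> flagged = new LinkedHashMap<>();
        for (Map.Entry<String, long[]> entry : countsByChannel.entrySet()) {
            double statistic = chiSquare(entry.getValue()[0], entry.getValue()[1]);
            if (statistic > THRESHOLD) {
                flagged.put(entry.getKey(), statistic);
            }
        }
        return flagged;
    }

    public static void main(String[] args) {
        Map<String, long[]> counts = new LinkedHashMap<>();
        counts.put("email", new long[] {5000, 5050}); // close to 50/50
        counts.put("sms", new long[] {2000, 2400});   // visibly lopsided
        System.out.println(suspiciousChannels(counts).keySet()); // [sms]
    }
}
```

A flagged channel doesn't prove the redirect is at fault, but it tells you where to look before trusting the aggregate result.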

Practical Guardrails for Short-Link Tests

- Use 302 (temporary) redirects during experiments — not 301 — to prevent destination caching

- Pass all query parameters through without modification or rewriting

- Freeze redirect configuration for the entire duration of the experiment

- Run a smoke test in each traffic channel before launching, not just in a browser on your laptop

- Log the full redirect path (including intermediate hops) for at least a sample of traffic
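For the last two guardrails, a small tracer that follows redirects by hand and records every hop can be run with different User-Agent strings. This is a sketch using the JDK's `java.net.http` client. It only approximates real in-app browsers, since it sends a header rather than reproducing their cookie or script behavior. The class name and User-Agent value are made up.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

final class RedirectTracer {

    // Resolves a Location header (absolute or relative) against the URL that returned it.
    static URI resolveLocation(URI current, String location) {
        return current.resolve(location);
    }

    // Follows redirects manually and returns each hop as "status url".
    static List<String> trace(URI start, String userAgent, int maxHops)
            throws IOException, InterruptedException {
        HttpClient client = HttpClient.newBuilder()
                .followRedirects(HttpClient.Redirect.NEVER)
                .build();
        List<String> hops = new ArrayList<>();
        URI current = start;
        for (int i = 0; i <= maxHops; i++) {
            HttpRequest request = HttpRequest.newBuilder(current)
                    .header("User-Agent", userAgent)
                    .GET()
                    .build();
            HttpResponse<Void> response = client.send(request, HttpResponse.BodyHandlers.discarding());
            hops.add(response.statusCode() + " " + current);
            Optional<String> location = response.headers().firstValue("Location");
            boolean isRedirect = response.statusCode() >= 300 && response.statusCode() < 400;
            if (!isRedirect || location.isEmpty()) {
                return hops;
            }
            current = resolveLocation(current, location.get());
        }
        hops.add("stopped: more than " + maxHops + " redirects");
        return hops;
    }

    public static void main(String[] args) throws Exception {
        if (args.length == 0) {
            System.out.println("usage: java RedirectTracer <short-url>");
            return;
        }
        for (String hop : trace(URI.create(args[0]), "redirect-audit/1.0", 5)) {
            System.out.println(hop);
        }
    }
}
```

Comparing the hop list and final query string across a few User-Agent values is a quick first check. It doesn't replace testing in the real apps.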

Experiment Integrity Checklist

- Single canonical destination — no branching inside the redirect layer

- Variant assignment verified as consistent across page reloads and return visits

- Cross-domain analytics configured if the redirect crosses domain boundaries

- No additional tracking scripts injected at the redirect layer

- Attribution data cross-checked against experiment data before drawing conclusions

- Redirect behavior validated in iOS Safari, Android WebView, and desktop — not just Chrome

Conclusion: Test Pages, Not Assumptions

A/B testing landing pages linked from short URLs is absolutely achievable — but it requires treating the redirect layer as part of the experiment, not infrastructure that exists outside of it.

Short links are genuinely useful. They simplify sharing, enable cleaner campaign tracking, and make URLs manageable across channels. They also introduce a class of subtle, invisible failures that experiments weren't designed to handle by default.

When you structure redirects correctly — single destination, preserved parameters, 302 during tests, no mid-experiment changes — results become trustworthy. Not just numbers that reached significance, but data you can stand behind when someone asks where the evidence came from.

The fastest path to better conversion rates isn't smarter copy or bolder CTAs. It's experiments built on a foundation you've actually verified. Start with the redirect.